Introduction

It seems like everybody is talking about AI these days. Whether people are hailing it or prophesying the end of the human race, AI is on people’s minds and it’s not going away.

AI is not new. What is recent is its accessibility to regular folk like you and me through generative AI tools such as the already ubiquitous ChatGPT.

Given its ease of use and impressive output, it’s easy to get blown away. It is, however, important to be mindful of the potential risks and pitfalls of using such tools.

We are already seeing news headlines about “hallucinations” (where AI generates false information) and infringements of intellectual property rights. We also know there are issues around ethics, data protection and confidentiality, to name just a few.

As the technology is still relatively new, we haven’t yet seen the full extent of its potential problems. The guardrails aren’t quite there either, since law and regulation are still playing catch-up with technology that is moving at lightning speed.

Despite this, the value of AI as a productivity tool (at the very least) should not be underestimated, and failure to adopt it is not an option for most businesses if they wish to remain competitive and retain the best talent. As such, instead of resisting generative AI, businesses could be thinking of pragmatic ways to manage the use of such tools to help minimise legal and practical risks.

A good starting point is to give employees guidance on the use of generative AI through an AI policy. Here are a number of useful points to include in such a policy:

Set the scene

Explain the company’s approach to AI and the extent to which the company has decided to use it in the business. Providing this context will help your teams understand why the policy exists.

Highlight key principles

Outline the principles that users should observe when using AI, the key ones being:

- Confidentiality

- Protection of intellectual property rights

- Protection of data privacy and security

- Compliance with legal and regulatory requirements

Provide guidelines for use

State how employees may use generative AI, for example:

- Asking users to first consider the nature of the data fed into the tool, to determine if it is too sensitive to share and whether such data should be anonymised

- Ensuring that AI-generated material undergoes careful human review before use for accuracy, completeness, and protection of both third-party rights and the company’s proprietary information

- Specifying whether tools must be used only with accounts created using company email addresses/credentials

- Stating that usage of AI must comply with business policies, codes of conduct and obligations in employment or engagement contracts

- Requiring approval before any new AI tool is used

- Informing users that they need to opt out (where possible) of allowing the AI tools to use input data for machine learning purposes

Prohibitions and restrictions

Be clear in the policy about any prohibitions or restrictions that users must observe, for example:

- Not to use certain types of data such as customer data (whether personal or not)

- Not to feed in company or customer data that is confidential or restricted information

- Not to use personally identifiable information (for example, people’s names, addresses, emails)

General

As with all policies, include general policy points such as:

- Scope: set out who the policy applies to – whether it also applies to suppliers or only internally to employees and consultants

- Ownership and enforcement: identify who in the business is responsible for enforcing the policy and approving any policy exceptions

- Breaches: specify the process for reporting breaches

- Violations: outline the potential outcomes of violations, for example, disciplinary actions, up to and including termination of employment or legal action where appropriate

Of course, an AI policy should articulate your business’s stance on AI. So, before implementing one, first consider how you are willing to incorporate AI within your business, weigh up the risks and benefits, and make sure you fully understand the impact of doing so.

If you’d like support preparing an AI Policy, we’re here to help.

Written by Natasha Aziz

Principal at My Inhouse Lawyer

One of our values (Growth) is, in many ways, all about cultivating a growth mindset. We are passionate about learning, improving and evolving. We learn from each other, use the best know-how tools in the market and constantly look for ways to simplify. Lawskool is our way of sharing with you. It isn’t intended to be legal advice, rather to enlighten you to make smart business decisions day to day with the benefit of some of our insight. We hope you enjoy the experience. There are some really good ideas and tips coming from some of the best inhouse lawyers. Easy to read and practical. If there’s something you’d like us to write about or some feedback you wish to share, feel free to drop us a note. Equally, if it’s legal advice you’re after, then just give us a call on 0207 939 3959.

Like what you see? Book a discovery call

How it works

1

You

It starts with a conversation about you. What you want and the experience you’re looking for

2

Us

We design something that works for you whether it’s monthly, flex, solo, multi-team or includes legal tech

3

Together

We use Workplans to map out the work to be done and when. We are responsive and transparent

Like to know more? Book a discovery call

Freedom to choose & change

MONTHLY

A responsive inhouse experience delivered via a rolling monthly engagement that can be scaled up or down by you. Monthly Workplans capture scope, timings and budget for transparency and control

FLEX

A more reactive yet still responsive inhouse experience for legal and compliance needs as they arise. Our Workplans capture scope, timings and budget putting you in control

PROJECT

For those one-off projects such as M&A or compliance yet delivered the My Inhouse Lawyer way. We agree scope, timings and budget before each piece of work begins

Ready to get started? Book a discovery call

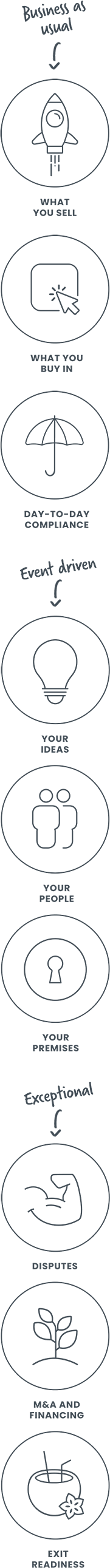

How we can help